Medical Data Exchange: Standards, Protocols, and Best Practices

You might be surprised to learn that healthcare alone generates 30% of the entire world’s data volume. Hospitals and clinics constantly send and receive massive volumes of it every day, whether as HL7 messages, FHIR transactions, lab results, or patient records.

Despite all this, up to 97% of the data generated in the healthcare industry remains unused, according to the World Economic Forum. Much of this comes down to the challenges of data exchange, and what is concerning is that those challenges grow as data volumes grow.

Delays, timeouts, and bottlenecks are among the major hindrances. These inefficiencies drag down the overall performance of healthcare systems and make decision-making slower, because the needed data is not readily available when required. This frustrates providers like you and keeps your healthcare IT teams under continuous pressure.

These delays and gaps in care delivery can be solved with a custom EHR integration system, but your medical data exchange capability has to be more than functional. Data has become the lifeline of care delivery, so EHR integration also needs to be fast and reliable.

That’s where this article comes in to take you through the real-world and practical ways of optimizing high-volume data exchange. We’ll cover things like best practices for message routing, ways to reduce processing time, and how to monitor system health properly.

We’ll also look at scalability and at why real-time data exchange across connected systems has become a necessity for modern healthcare systems.

So, let’s get into how you can keep your data flowing smoothly, even when data volumes feel overwhelming.

What is Medical Data Exchange in Healthcare?

Before getting into the intricacies of healthcare interoperability and how it can help you to improve your system’s performance, here are a few things that you need to know about medical data exchange in healthcare.

Medical data exchange, as the name suggests, refers to the secure sharing of patient health information between disparate healthcare systems for care delivery and related purposes. The data usually shared includes medical history, lab results, prescriptions, imaging, and clinical notes.

Medical data exchange lays the foundation of EHR interoperability: it allows different EHR systems to communicate, interpret, and use shared data effectively by using standards such as HL7 and FHIR.

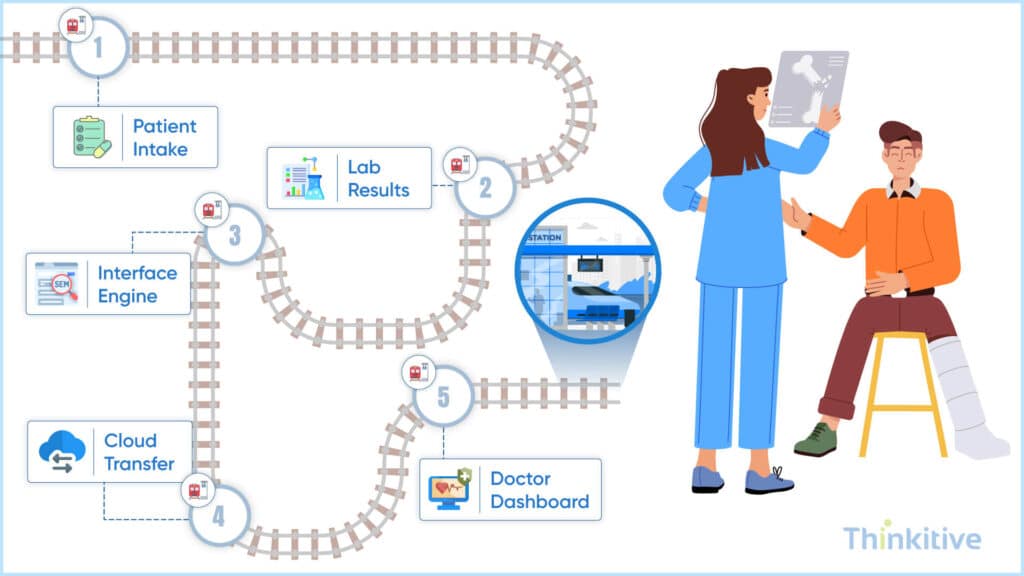

How Medical Data Exchange Works in Healthcare Systems

Waiting for the patient data to load during a busy clinic day is not a pleasant experience. But this happens with most providers, and the reason for this is data throughput issues. These issues happen when your backend system fails to keep pace with modern healthcare demands.

The performance bottlenecks that many healthcare providers face during data exchange typically stem from several key factors. First is your network infrastructure, which might be falling short for today’s high-volume data exchange requirements, especially with the growing amount of medical imaging and genomic data.

Next in line are interface configuration issues, which are particularly troublesome in healthcare environments where multiple systems need to communicate seamlessly. When healthcare interfaces are not tuned to match each other, medical data processing speed suffers.

So, how do you identify these problems before they affect patient care? Begin by implementing robust performance monitoring tools that can track essential metrics like healthcare data throughput, latency, and error rates. Additionally, defining a baseline for performance gives you a reference point to find any degradation before it becomes critical.
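To make the baseline idea concrete, here is a minimal Python sketch. It assumes you already collect per-message latencies and error counts for each monitoring window; the names `Baseline`, `summarize`, and `degraded`, and the 1.5x tolerance, are illustrative choices rather than part of any particular monitoring product.

```python
from dataclasses import dataclass
from statistics import quantiles


@dataclass
class Baseline:
    p95_latency_ms: float   # 95th-percentile latency measured during a normal period
    error_rate: float       # fraction of failed messages during a normal period


def summarize(latencies_ms: list[float], errors: int, total: int) -> dict:
    """Reduce one monitoring window to p95 latency and error rate."""
    # quantiles(n=20) returns 19 cut points; the last one is the 95th percentile.
    p95 = quantiles(latencies_ms, n=20)[-1]
    return {"p95_latency_ms": p95, "error_rate": errors / total if total else 0.0}


def degraded(window: dict, baseline: Baseline, tolerance: float = 1.5) -> list[str]:
    """Return the metrics that exceed the baseline by more than `tolerance` times."""
    findings = []
    if window["p95_latency_ms"] > baseline.p95_latency_ms * tolerance:
        findings.append("latency")
    if window["error_rate"] > baseline.error_rate * tolerance:
        findings.append("error_rate")
    return findings
```

Running `degraded(summarize(...), baseline)` on every window gives you a simple early-warning signal; the right tolerance depends on how noisy your own traffic is.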

And the impact these bottlenecks have goes beyond technical frustrations. For instance, any delays in clinical workflows extend the patient wait times, increase provider frustration, and possibly compromise care quality.

This is why EHR performance tuning should not be a one-time project but a continuous process. To create a plan for ongoing tuning, start by analyzing your present system performance, identifying bottlenecks, and creating a prioritized roadmap for improvements.

Current Cloud and Modern Architecture Performance Approaches

To support high-volume EHR data exchange, healthcare organizations are embracing cloud-native performance strategies offered by AWS (HealthLake), Azure (Health Data Services), and GCP (Healthcare API), optimizing for scalability, redundancy, and regional data compliance. Containerization and Kubernetes help deploy modular, resilient workloads, allowing precise resource scaling for critical healthcare apps.

For latency-sensitive applications, edge computing and CDNs accelerate access to patient data across geographies, especially for remote monitoring and telehealth. Meanwhile, serverless architectures offer elastic compute for event-driven tasks like real-time alerts, FHIR queries, or API processing, reducing idle cost while maintaining responsiveness. Together, these modern approaches offer the performance backbone for future-ready EHR infrastructure.

You should also know the difference between real-time and batch data exchange workflows. This table compares the two:

| Aspect | Real-Time Data Exchange | Batch Data Exchange |
| --- | --- | --- |
| Definition | Data is exchanged instantly as events occur | Data is collected and sent at scheduled intervals |
| Speed | Immediate (milliseconds to seconds) | Delayed (minutes, hours, or daily) |
| Use Cases | Emergency care, e-prescriptions, live patient monitoring | Reporting, analytics, billing, bulk data transfers |
| Technology Approach | APIs (often using FHIR), event-driven systems | File-based transfers, bulk APIs, ETL processes |
| System Load | Continuous but distributed load | High load during batch processing windows |
| Complexity | Higher (requires low latency, high availability) | Lower (simpler scheduling and processing) |
| Data Freshness | Always up-to-date | Potentially outdated depending on schedule |
| Error Handling | Needs instant retry/failover mechanisms | Easier to retry entire batch jobs |
| Scalability | Requires robust infrastructure for concurrency | Easier to scale with scheduled processing |
| Example | Real-time sharing of vitals from RPM devices to EHR | Nightly sync of patient records between hospital systems |

Architecture Optimization for Secure Medical Data Exchange

As said above, the healthcare industry generates 30% of the world’s data volume, and this includes documents, medical images, and other high-volume data. And to handle this data and data exchange, having a robust architecture is not just a consideration; it’s a necessity.

The traditional hub-and-spoke model has served healthcare well; however, modern high-volume data exchange demands more advanced approaches. One such approach is a service bus architecture, which distributes processing loads more efficiently. A second, the microservices approach, provides the flexibility required to scale individual components based on demand patterns.

In addition to this, asynchronous processing is a boon to organizations struggling with EHR performance tuning. This approach shows its immense potential when it comes to handling complex medical images or genomic data sets that might clog the conventional synchronous interfaces.
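As a rough illustration of asynchronous processing, the Python sketch below accepts incoming work immediately and lets background workers drain a queue. `process_study` is a hypothetical stand-in that only sleeps; in a real system it would be your storage or transformation call, and the worker count of 3 is an arbitrary assumption.

```python
import asyncio


async def process_study(study_id: str) -> str:
    """Stand-in for slow work such as storing an imaging study (hypothetical)."""
    await asyncio.sleep(0.01)  # simulates I/O; no real storage call is made
    return f"{study_id}:stored"


async def worker(queue: asyncio.Queue, results: list[str]) -> None:
    """Pull study IDs off the queue until cancelled."""
    while True:
        study_id = await queue.get()
        try:
            results.append(await process_study(study_id))
        finally:
            queue.task_done()


async def ingest(study_ids: list[str], workers: int = 3) -> list[str]:
    """Accept all studies immediately, then let workers drain the queue."""
    queue: asyncio.Queue = asyncio.Queue()
    results: list[str] = []
    tasks = [asyncio.create_task(worker(queue, results)) for _ in range(workers)]
    for study_id in study_ids:
        queue.put_nowait(study_id)   # the sender is not blocked by processing
    await queue.join()               # wait until every queued item is processed
    for task in tasks:
        task.cancel()
    await asyncio.gather(*tasks, return_exceptions=True)
    return results
```

Calling `asyncio.run(ingest(["s1", "s2", "s3"]))` processes the studies concurrently, so one slow item does not hold up the rest. Completion order is not guaranteed.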

When you want to implement event-driven architectures, publish-subscribe patterns are a strong option. A publish-subscribe setup can notify multiple systems simultaneously when a patient’s lab results arrive, significantly improving medical data processing speed without creating integration bottlenecks.
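The lab-result scenario can be sketched in a few lines of Python. This is an in-memory toy, not a production broker: the `EventBus` class and the `lab.result` topic name are assumptions made for illustration, and a real deployment would typically sit on a dedicated messaging system.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict], None]


class EventBus:
    """In-memory publish-subscribe bus; a real deployment would use a message broker."""

    def __init__(self) -> None:
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, event: dict) -> int:
        """Deliver the event to every subscriber of the topic; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(event)
        return len(handlers)


# Two independent consumers receive the same lab result from a single publish call.
bus = EventBus()
ehr_inbox: list[dict] = []
alert_inbox: list[dict] = []
bus.subscribe("lab.result", ehr_inbox.append)
bus.subscribe("lab.result", alert_inbox.append)
delivered = bus.publish("lab.result", {"patient_id": "p-1", "test": "HbA1c"})
```

The publisher never needs to know who is listening, which is what keeps new integrations from turning into point-to-point bottlenecks.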

Of course, healthcare data availability isn’t optional. High-availability configurations with intelligent failover clustering ensure that critical data remains accessible during hardware failures or maintenance windows. For larger health systems, geographic distribution provides a more reliable shield against regional disruptions.

Keep in mind that the most successful healthcare organizations are those that optimize their healthcare interfaces not just for today but for tomorrow’s innovations. With disaster recovery capabilities, these organizations further secure their systems.

High Availability & Disaster Recovery

High availability and disaster recovery are another way to strengthen your EHR integration and interoperability bridge: you have to ensure your system remains operational even during failures. Some of the key strategies are:

- Redundancy: Multiple services/instances

- Failover systems: Automatic switch to backup systems

- Data Replication: Across regions and data centers

- Load Balancing: Distributing traffic evenly

This matters because, when your system experiences downtime, both patient care and patient data stay safe.
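Here is a small Python sketch of how load balancing and failover can work together, under simplifying assumptions: health is tracked by explicit `mark_down` and `mark_up` calls rather than real health checks, and the endpoint names are made up.

```python
from itertools import cycle


class FailoverBalancer:
    """Round-robin over endpoints, skipping any currently marked unhealthy."""

    def __init__(self, endpoints: list[str]) -> None:
        self._endpoints = list(endpoints)
        self._healthy = set(endpoints)
        self._ring = cycle(self._endpoints)

    def mark_down(self, endpoint: str) -> None:
        self._healthy.discard(endpoint)

    def mark_up(self, endpoint: str) -> None:
        if endpoint in self._endpoints:
            self._healthy.add(endpoint)

    def next_endpoint(self) -> str:
        """Return the next healthy endpoint, or raise if none is available."""
        for _ in range(len(self._endpoints)):
            candidate = next(self._ring)
            if candidate in self._healthy:
                return candidate
        raise RuntimeError("no healthy endpoints available")
```

While every endpoint is healthy, traffic is spread evenly; once one is marked down, requests automatically go to the remaining instances until it is marked up again.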

API-First Architecture for Scalable Clinical Data Exchange

This is another way of optimizing your medical data exchange practices: systems designed around APIs as their primary interface for communication enable seamless integration across apps, devices, and platforms. The major advantages are faster integration with third-party systems, support for real-time data exchange, and, most importantly, improved scalability and developer productivity.

Database and Storage Optimization Techniques

Ever feel like your systems are moving in slow motion despite having cutting-edge technology? If yes, then you need to manage your data more efficiently and optimize the flow to make it more seamless.

This is where healthcare data throughput comes into play. When patient information moves slowly, everything from data analysis to discharge suffers. However, smart index optimization can dramatically speed up these processes: index the columns your queries filter on most often and structure database queries carefully.
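The effect of an index is easy to see with Python’s built-in `sqlite3` module. The sketch below uses a made-up `lab_results` table and an in-memory database; your production database will have its own tooling, but the idea of comparing the query plan before and after adding an index carries over.

```python
import sqlite3


def query_plan(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> str:
    """Return SQLite's description of how it would execute the query."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[-1] for row in rows)  # last column is the readable detail


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE lab_results (id INTEGER PRIMARY KEY, patient_id TEXT, "
    "test_code TEXT, result_value REAL)"
)
conn.executemany(
    "INSERT INTO lab_results (patient_id, test_code, result_value) VALUES (?, ?, ?)",
    [(f"p-{i % 500}", "HBA1C", 5.0 + (i % 30) / 10) for i in range(5000)],
)

lookup = "SELECT result_value FROM lab_results WHERE patient_id = ?"
plan_before = query_plan(conn, lookup, ("p-42",))   # no index yet: a table scan

conn.execute("CREATE INDEX idx_lab_patient ON lab_results (patient_id)")
plan_after = query_plan(conn, lookup, ("p-42",))    # now searches via the index
```

Printing `plan_before` and `plan_after` shows the planner switching from scanning the whole table to searching with `idx_lab_patient`.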

Another method to manage data better is to consider database partitioning for very large datasets. I’ve seen many organizations improve their response times tremendously just by splitting patient data across date ranges or departments.
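As a simple illustration of range-based partitioning, the Python sketch below routes records into quarterly partitions by encounter date. The `encounters_YYYY_qN` naming scheme and the `encounter_date` field are assumptions for this example; most databases offer native partitioning that you would use instead of application code.

```python
from collections import defaultdict
from datetime import date


def partition_key(encounter_date: date) -> str:
    """Map a record to a quarterly partition name, e.g. 'encounters_2024_q1'."""
    quarter = (encounter_date.month - 1) // 3 + 1
    return f"encounters_{encounter_date.year}_q{quarter}"


def partition(records: list[dict]) -> dict[str, list[dict]]:
    """Group records by partition so each query touches only the ranges it needs."""
    buckets: dict[str, list[dict]] = defaultdict(list)
    for record in records:
        buckets[partition_key(record["encounter_date"])].append(record)
    return dict(buckets)
```

A query for last quarter’s encounters then only has to read one partition instead of the full history.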

Paying attention to your storage capacity also pays off significantly, as using SSDs improves the processing speed considerably. In all of this, you must not forget the database server configuration. Memory allocation, connection pooling, and buffer sizes all impact how efficiently your system handles requests during peak hours.

When you keep your historical data well-organized with smart archiving strategies, you keep your active database lean while also complying with retention regulations. This orderly management of legacy data can pay off far more than you might expect.

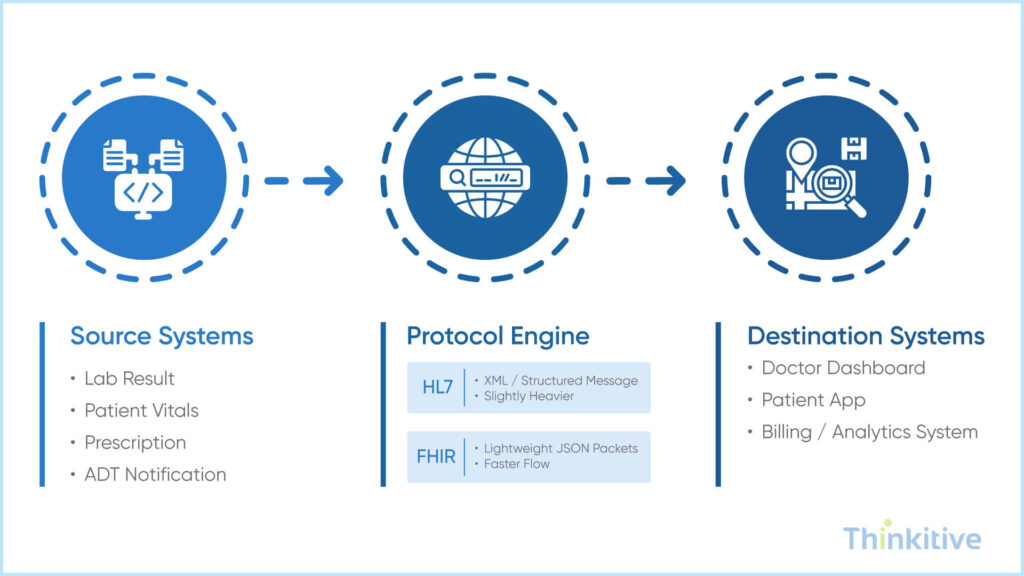

Message Processing and Protocol Handling

Even when data is shared quickly, lacking processing and transformation capabilities can delay all other tasks and operations. So, let’s look into some of the best practices to optimize your healthcare data throughput without compromising reliability.

The first is to choose between HL7 and FHIR, and while choosing, consider not only standards compliance but also performance characteristics. While HL7 is more stable, FHIR’s RESTful approach gives you the faster medical data processing speed that high-speed data exchange requires.

To differentiate the two clearly: the HL7 standard is message-based and often uses delimited and XML formats, and its heavier parsing adds transformation overhead. HL7 is better suited to batch-style or legacy integrations.

FHIR, on the other hand, is API-driven and typically uses lightweight JSON. With it, data retrieval is faster and integration becomes easier, which makes it best suited to real-time, high-performance data exchange.

In short, FHIR offers speed and scalability, while HL7 remains common in legacy-heavy environments.
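To show the practical difference, here is a hedged Python sketch that reads the same fictional patient from a pipe-delimited, HL7 v2-style PID segment and from a FHIR Patient resource in JSON. Both samples are invented for illustration, and the parser handles only the few fields shown; real HL7 parsing has to deal with escape sequences, repetitions, and optional components.

```python
import json

# Both samples are made up for illustration; they describe the same fictional patient.
HL7_V2_PID = "PID|1||12345^^^HOSP^MR||DOE^JANE||19800101|F"
FHIR_PATIENT = json.dumps({
    "resourceType": "Patient",
    "identifier": [{"value": "12345"}],
    "name": [{"family": "DOE", "given": ["JANE"]}],
    "birthDate": "1980-01-01",
    "gender": "female",
})


def parse_pid(segment: str) -> dict:
    """Pull a few fields out of a pipe-delimited PID segment by position."""
    fields = segment.split("|")
    family, given = fields[5].split("^")[:2]   # PID-5: patient name components
    dob = fields[7]                            # PID-7: date of birth as YYYYMMDD
    return {
        "mrn": fields[3].split("^")[0],        # PID-3: first component of the identifier
        "family": family,
        "given": given,
        "birth_date": f"{dob[:4]}-{dob[4:6]}-{dob[6:8]}",
    }


def parse_fhir_patient(payload: str) -> dict:
    """Read the same fields from a FHIR Patient resource by name."""
    resource = json.loads(payload)
    name = resource["name"][0]
    return {
        "mrn": resource["identifier"][0]["value"],
        "family": name["family"],
        "given": name["given"][0],
        "birth_date": resource["birthDate"],
    }
```

The positional parser depends on knowing which field number holds what, while the FHIR version reads named elements with a standard JSON library, which is a large part of why FHIR integrations tend to be quicker to build.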

Not just the standards, but the formats are also a significant factor. JSON typically offers lighter parsing overhead than XML. And this can significantly impact EHR performance tuning efforts when millions of messages are sent or received.

Smart transformation optimization makes all the difference in high-volume data exchange scenarios:

- Implement pre-computed lookups for common code translations

- Leverage caching for frequently accessed reference data

- Fine-tune your transformation engine’s memory allocation
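The first two items in this list can be sketched with Python’s standard `functools.lru_cache`. The code table below is entirely hypothetical (the `STD-…` values are placeholders, not real terminology codes), and `slow_reference_lookup` stands in for whatever expensive call your transformation engine makes today.

```python
from functools import lru_cache

# Hypothetical local-to-standard code table; real mappings would come from
# your terminology service or reference database.
LOCAL_TO_STANDARD = {"GLU": "STD-0001", "HBA1C": "STD-0002", "NA": "STD-0003"}


def slow_reference_lookup(local_code: str) -> str:
    """Stand-in for an expensive call such as a terminology-service request."""
    return LOCAL_TO_STANDARD.get(local_code, "UNMAPPED")


@lru_cache(maxsize=1024)
def translate(local_code: str) -> str:
    """Cached translation: repeated codes are served from memory."""
    return slow_reference_lookup(local_code)
```

After a burst of messages, `translate.cache_info()` reports how many lookups were served from the cache, which is a quick way to check whether the cache size fits your traffic.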

Apart from all of this, thoughtful batch processing can be the biggest lever to elevate your system’s performance. When batches are the right size, they balance throughput with responsiveness. Batches that are too large cause latency, while batches that are too small waste processing cycles.
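Batching itself takes very little code. The Python sketch below splits a message stream into fixed-size batches; the `batched` helper is written out here for illustration, and the right `batch_size` is something you would find by measuring your own throughput and latency.

```python
from itertools import islice
from typing import Iterable, Iterator


def batched(messages: Iterable[str], batch_size: int) -> Iterator[list[str]]:
    """Yield consecutive batches of at most `batch_size` messages."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(messages)
    while batch := list(islice(iterator, batch_size)):
        yield batch
```

Because it works on any iterable, the same helper can sit in front of a file reader, a queue consumer, or a database cursor while you experiment with different sizes.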

Monitoring and Proactive Management

Simply building the integration network is not enough in today’s fast-paced digital environment. Constantly monitoring it for problems or performance issues is also critical.

The first tool that makes this easier is an intuitive dashboard. Dashboards let your team read the system’s vitals at a glance instead of hunting for the right signal in unstructured, unformatted data. That makes it much easier to catch issues in time, as your team can spot them in minutes instead of hours.

But when you bring in proactive management, it takes monitoring a step ahead. You can set intelligent alerting thresholds and give your system the ability to raise its hand before things go south. However, the real game-changer is predictive analytics. By analyzing trends and capacity planning, you can prepare for growth before it overwhelms you.

Machine learning algorithms can also detect those subtle anomalies that a human eye might miss. These systems learn and become more intelligent over time, predicting peak loads with remarkable accuracy.
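A full machine learning model is beyond a short example, but the underlying idea of flagging values that deviate from the norm can be shown with a plain statistical stand-in. The Python sketch below uses a z-score check, which is a much simpler technique than the learning systems described above; the threshold of 3 standard deviations is a common default, not a recommendation.

```python
from statistics import mean, stdev


def anomalies(values: list[float], threshold: float = 3.0) -> list[int]:
    """Return indexes of values more than `threshold` standard deviations from the mean."""
    if len(values) < 2:
        return []
    mu, sigma = mean(values), stdev(values)
    if sigma == 0:
        return []   # all values identical, nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mu) / sigma > threshold]
```

Fed with per-minute message counts or latencies, this flags sudden spikes; trained models earn their place when the pattern is seasonal or too subtle for a fixed threshold.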

Automated intervention strategies kick in when things get busy and direct the high-load traffic towards less congested paths. This frees the system and lets it perform at its best even during the rush hours rather than breaking under pressure.

The impact of monitoring on healthcare practices is that it lets you change your approach from reactive to proactive. On top of that, continuous monitoring helps ensure that your system consistently performs at its best and stays reliable.

Testing and Validation of Exchange Capabilities

Healthcare systems need to be rigorously tested before going live, because if the system lags or stops working while a provider is making split-second decisions, it could impact care outcomes.

This is why you need comprehensive performance testing. Doing load testing shows you how the system will handle typical Tuesday morning traffic. Whereas stress testing pushes boundaries to find where things can break and endurance testing tests if the system can maintain performance over days and weeks without degradation.

But using real patient data for testing is neither safe nor practical; this is where synthetic data plays its part. You can create realistic datasets that mirror the volume, variety, and velocity of actual healthcare transactions without privacy concerns.
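Here is a minimal Python sketch of synthetic data generation. It produces FHIR-style Patient resources from small made-up name lists and a seeded random generator; the field selection and the `synthetic-` ID prefix are assumptions, and dedicated synthetic-data tools produce far richer records than this.

```python
import random
from datetime import date, timedelta

FAMILY_NAMES = ["Rivera", "Chen", "Okafor", "Novak"]   # made-up sample values
GIVEN_NAMES = ["Alex", "Sam", "Priya", "Jordan"]


def synthetic_patients(count: int, seed: int = 0) -> list[dict]:
    """Generate FHIR-style Patient resources that contain no real patient data."""
    rng = random.Random(seed)  # seeded so test runs are reproducible
    patients = []
    for i in range(count):
        birth = date(1940, 1, 1) + timedelta(days=rng.randrange(30000))
        patients.append({
            "resourceType": "Patient",
            "id": f"synthetic-{i:06d}",
            "name": [{"family": rng.choice(FAMILY_NAMES),
                      "given": [rng.choice(GIVEN_NAMES)]}],
            "gender": rng.choice(["female", "male", "other", "unknown"]),
            "birthDate": birth.isoformat(),
        })
    return patients
```

Seeding the generator means a failing load test can be replayed with exactly the same dataset, which makes performance regressions much easier to track down.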

Continuous monitoring through automated performance tests catches issues before users do. Regular regression testing ensures new features don’t compromise existing performance. And performance SLA monitoring provides the accountability backbone your stakeholders require.

Last but not least, automated testing pipelines let you run these exchange validations continuously, without manual intervention.

Modern Healthcare Data Volume Challenges

EHR systems today must process more than just clinical notes. AI/ML workloads now demand real-time access to structured and unstructured data for predictive modeling, which requires low-latency pipelines and GPU-optimized infrastructure. Genomics and precision medicine introduce massive, high-complexity datasets that strain storage and processing layers, making parallel processing and data compression critical.

The integration of IoT and medical devices further adds high-frequency data streams, requiring edge processing, buffering strategies, and lightweight protocols (like MQTT) to maintain throughput and reliability. At the population level, big data analytics platforms must support distributed computing, data lake architectures, and fast query engines to surface insights across millions of patient records—all without compromising system speed or uptime.

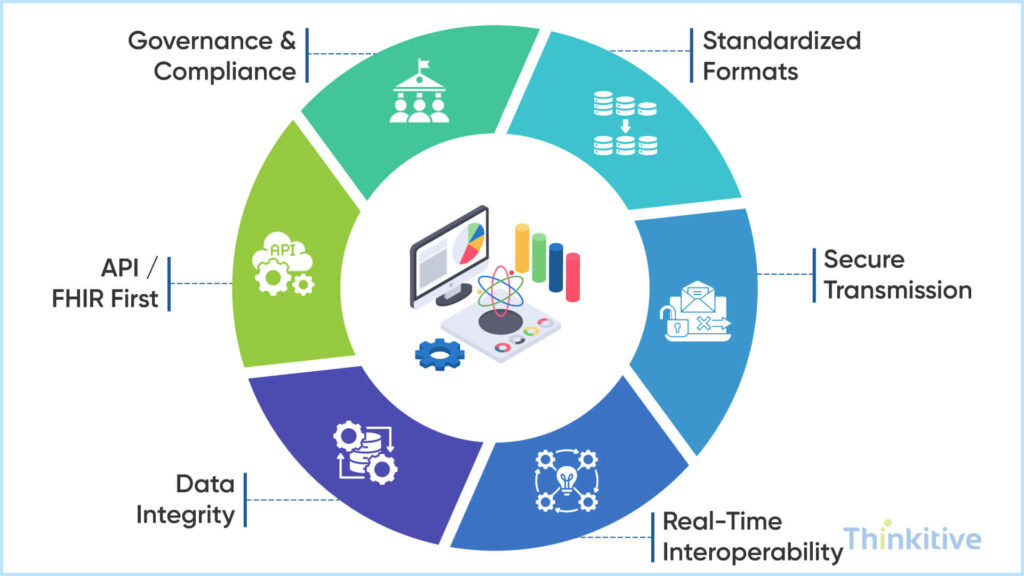

Best Practices for Medical Data Exchange

Believe it or not, your practice’s clinical and administrative operations depend heavily on medical data exchange. That is why you should pay special attention to it. On that note, here are some of the best practices that you can adopt:

- Standardizing Data Formats & Protocols: Use standardized data formats and protocols for storing and exchanging data. This is crucial because it makes it easier for your systems to interpret data and convert it into a usable format.

- Ensuring Secure Transmission (HTTPS, SFTP): Use secure data transmission protocols such as HTTPS and SFTP.

- Designing for Real-Time Interoperability: When designing the architecture and interoperability bridge, design it for real-time data exchange, since most of your practice aspects depend on real-time data transfer.

- Maintaining Data Integrity & Validation: Maintain data integrity and validate data before it enters your system. This way you can ensure that the system works efficiently without major disruptions.

- API-first & FHIR-first Strategies: Use API-first and FHIR-first strategies so that integration becomes easier and real-time data exchange is also enabled.

- Governance, Auditing & Compliance Framework: Use the requirements set by governing bodies as the blueprint for your compliance framework, and make auditing a recurring practice so that you are aware of everything that is happening in your system.
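For the data integrity and validation practice in the list above, a validation step can be as simple as the Python sketch below. The required fields and rules are assumptions chosen for illustration, and the date check only accepts full YYYY-MM-DD dates; a production system would validate against the full profile of whichever standard it uses.

```python
from datetime import date

REQUIRED_FIELDS = ("resourceType", "id", "birthDate")   # assumed minimum for this sketch


def validate_patient(resource: dict) -> list[str]:
    """Return a list of problems; an empty list means the record passed these checks."""
    problems = [f"missing field: {f}" for f in REQUIRED_FIELDS if not resource.get(f)]
    if resource.get("resourceType") not in (None, "Patient"):
        problems.append("resourceType must be 'Patient'")
    birth = resource.get("birthDate")
    if birth:
        try:
            if date.fromisoformat(birth) > date.today():
                problems.append("birthDate is in the future")
        except (TypeError, ValueError):
            problems.append("birthDate is not a valid YYYY-MM-DD date")
    return problems
```

Rejecting or quarantining records with a non-empty problem list at the boundary keeps bad data from spreading to every downstream system that trusts the exchange.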

Conclusion

In healthcare integration, performance isn’t just about speed; it’s about saving lives. The strategies we’ve explored today, from caching and load balancing to asynchronous processing, create systems that don’t just work, but excel when it matters most.

The real magic happens when we balance blazing performance with rock-solid reliability and rich functionality. This isn’t an either/or scenario—it’s about smart trade-offs that support your specific clinical workflows.

By shifting from reactive firefighting to proactive performance management, you’ll stay ahead of issues before they impact care delivery. And with AI-powered analytics and edge computing on the horizon, the performance bar keeps rising.

Don’t wait for your next system slowdown to act. Schedule a performance assessment today, and give yourself an integration that performs smoothly and brilliantly.

Frequently Asked Questions

Medical data exchange refers to the secure transfer of patient information—such as medical history, lab results, and prescriptions—across different healthcare systems and providers. It plays a critical role in enabling healthcare interoperability, ensuring that data can be accessed, shared, and used effectively across the care continuum.

Understanding how medical data exchange works in healthcare systems involves looking at how data is transmitted using standardized formats and protocols. Systems communicate through APIs, messaging standards, and networks like health information exchange platforms, allowing seamless clinical data exchange between EHRs, labs, and other healthcare entities in real time or batch modes.

The difference between direct vs query-based medical data exchange lies in how data is shared:

- Direct exchange: Data is pushed from one provider to another (e.g., sending patient records to a specialist).

- Query-based exchange: A provider searches and retrieves patient data from external systems when needed.

Both approaches are essential for efficient healthcare data exchange, depending on the use case.

The most widely used medical data exchange protocols include:

- HL7 – Traditional messaging standard for healthcare systems

- FHIR – Modern, API-based standard for real-time data exchange

- DICOM – Used for medical imaging data

These protocols enable structured and secure clinical data exchange across healthcare environments.

FHIR is becoming the preferred standard because it supports API-driven, real-time medical data exchange using lightweight formats like JSON. It simplifies integration, improves scalability, and enhances healthcare interoperability, making it ideal for modern digital health ecosystems.

A health information exchange (HIE) acts as a centralized or federated network that enables different healthcare organizations to share patient data securely. It facilitates seamless healthcare data exchange, improves care coordination, reduces duplication of tests, and ensures that providers have access to complete patient information.

Some key best practices for medical data exchange include:

- Using standardized protocols like FHIR and HL7

- Implementing strong encryption and access controls

- Ensuring compliance with healthcare regulations (e.g., HIPAA)

- Monitoring systems for anomalies and performance issues

- Using secure APIs for clinical data exchange

These practices ensure both security and reliability in healthcare systems.

AI can significantly enhance medical data exchange by automating data mapping, detecting anomalies, and optimizing data routing. It enables predictive analytics for load management, improves system performance, and supports intelligent decision-making in healthcare interoperability. This makes large-scale healthcare data exchange faster, more efficient, and more reliable.